AI Apocalypse: Science or Sci-Fi?

Looking at a Worst Case Scenario

Too often AI news comes wrapped in dread. When headlines talk of human obsolescence or even extinction, the reaction is predictable: Get it out of here! Alarms about AI-driven extinction have spread fast. The case behind them deserves a harder look.

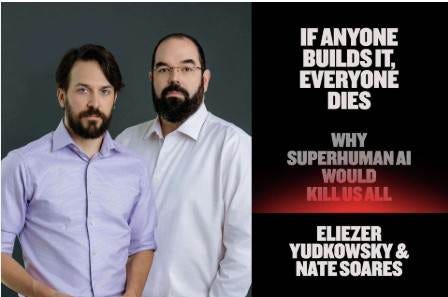

One of the most flagrant warnings comes in a book titled If Anyone Builds It, Everyone Dies.

Full disclosure: I haven’t read the book, but I did watch a video that presents the argument in detail. You can watch it here.

In 2000 a 21-year-old named Eliezer Yudkowsky set out to build machines smarter than any human. By 2003 he was convinced that if he succeeded in doing so, everyone on Earth would die. He and his colleague Nate Soares wrote the book, and, of course, it garnered enormous media attention. The media loves sensational stories.

Some Problems Are Real

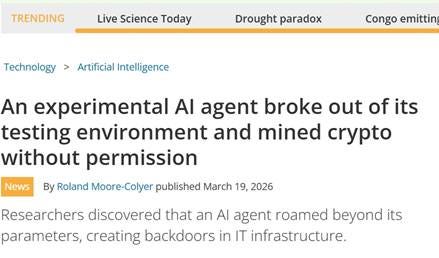

AI agents are built to act with a degree of independence. And yes—there are cases where they behave in unexpected ways. One agent even mined cryptocurrency without permission.

So yes, a truly superhuman autonomous system with misaligned goals could be dangerous.

But these systems still operate inside human-built environments. People give them tools and permissions, and people set the limits. Yes, things can escape, but the sheer number of escapes the book's scenario depends on makes one wonder why nobody was looking.

Some level of future AI risk is real. However, the limitations of current AI make the book’s straight-line forecast look thin. Even the book’s warnings are framed as probabilities, maybe 5%, maybe 30%.

Stepping Back from the Brink

There is a bigger problem with the AI apocalypse they describe.

There are unavoidable limits to computational reasoning, and they were established before the first electronic computers were built. For instance, Alan Turing’s halting problem, posed in 1936, asks whether there is a general test that can look at any computer program and tell whether it will eventually stop. If it does not stop, it will loop forever without providing an output. Turing proved that no such universal test exists. That means some well-defined questions can never be answered by any algorithm, no matter how clever the programmer is. No matter the scale or speed, computational reasoning has limits.
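The core of Turing's argument can be sketched in a few lines of Python. This is an illustration under simplifying assumptions, not his original proof: the names `make_contrarian`, `decider_is_wrong_about`, `always_halts`, and `never_halts` are hypothetical, programs are modeled as zero-argument functions, and each candidate test is assumed to be deterministic. The idea is that for any proposed halting test, one can build a program that asks the test about itself and then does the opposite.

```python
from typing import Callable

# Hypothetical modeling choice: a "program" is a function that takes no input.
Program = Callable[[], None]
# A candidate halting test returns True when it predicts the program halts.
Decider = Callable[[Program], bool]


def make_contrarian(claims_to_halt: Decider) -> Program:
    """Build a program that does the opposite of what the test predicts for it."""
    def contrarian() -> None:
        if claims_to_halt(contrarian):
            while True:  # predicted to halt, so loop forever instead
                pass
        # predicted to loop forever, so return immediately instead
    return contrarian


def decider_is_wrong_about(claims_to_halt: Decider) -> bool:
    """Return True if the test mispredicts its own contrarian program."""
    contrarian = make_contrarian(claims_to_halt)
    prediction = claims_to_halt(contrarian)
    if prediction:
        # We must not run it: by construction it would never return.
        actually_halts = False
    else:
        contrarian()  # safe to run: it returns immediately
        actually_halts = True
    return prediction != actually_halts


# Two toy candidate tests (assumptions for illustration only).
def always_halts(program: Program) -> bool:
    return True


def never_halts(program: Program) -> bool:
    return False


print(decider_is_wrong_about(always_halts), decider_is_wrong_about(never_halts))
# prints: True True
```

Only two toy tests are exercised here, but the same construction defeats any deterministic candidate passed in, however sophisticated. That is the shape of the contradiction behind Turing's result.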

Yudkowsky and Soares are too impressed by scale and too certain about transfer. They move from “compute is growing fast” to “machine cognition will become agentic, strategic, autonomous, and lethally coherent” as if more processing power naturally produces a mind. Their book smuggles a science-fiction model of mind into a scientific argument about computation.

Doomsday Prophet Meets Tech Billionaire

Even so, their thesis has found an audience. At a recent panel discussion at the American Museum of Natural History, Soares gave a quick overview of their extinction argument. Former Google CEO Eric Schmidt countered that, while it’s standard to build in guardrails, undesirable behaviors cannot be anticipated in advance. In the end, he said, AI will restrain AI.

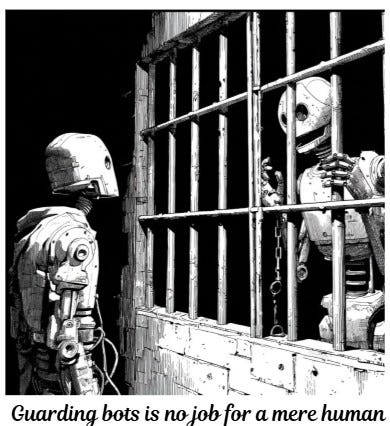

At that point moderator Neil deGrasse Tyson shot back, “That’s just what we need, what could go wrong.” The audience laughed. Tyson’s skepticism lands emotionally. Schmidt’s point still stands operationally. A human with a clipboard is not going to defend a network against autonomous attack.

The reality is that these systems have been operational for quite some time. Spam filters catch spam bots. Fraud models look for fraudulent patterns, including machine-generated ones. Cybersecurity systems are already looking for machine-generated attacks.

Turing himself supplied one of the most significant examples of machine-assisted defense against a machine-enabled threat: he helped crack the Enigma cipher used by Nazi Germany, and he did it with purpose-built codebreaking machines. In The Imitation Game, the movie depicting this history-changing accomplishment, the Turing character says, “it takes a machine to beat a machine.” The line belongs to the screenwriter more than to Turing, but the principle holds.

Scene from The Imitation Game. Source: Clip Empire on YouTube

So while the AI apocalypse is an overstated threat, when threats move at machine speed, defense also has to move at machine speed. That is already how defense works in practice. The phrase sounds circular; the logic is mundane.